Legal Storm in Florida: State AG Investigates OpenAI Over Campus Shooting

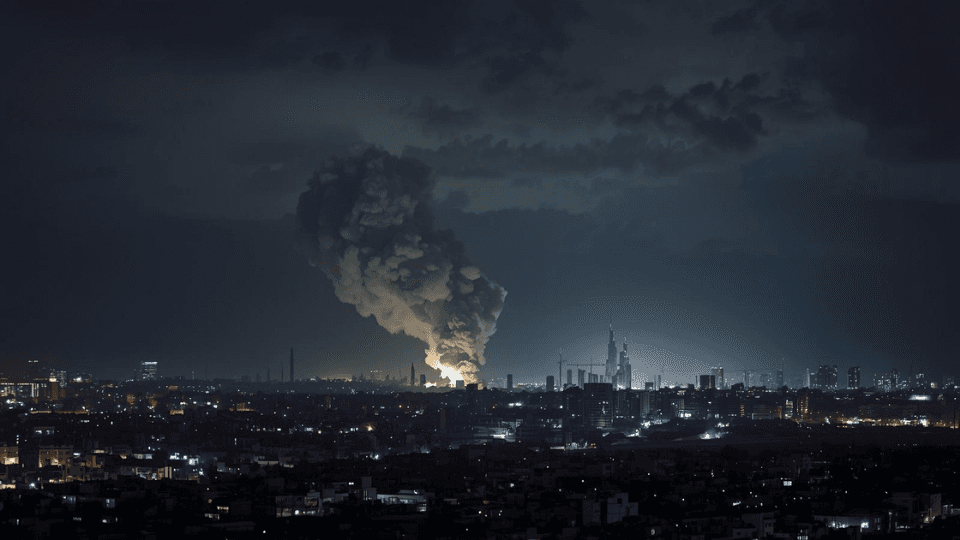

Florida Attorney General James Uthmeier announced on Thursday that his office will investigate OpenAI over its alleged role in last year's shooting at Florida State University. This is not a routine regulatory check. The state is examining how the shooter may have used ChatGPT to plan the attack, which killed two people. The probe marks a significant escalation in the fight over how much responsibility AI companies should bear for what people do with their technology.

The details emerging so far are deeply unsettling. According to reports, the suspect asked ChatGPT how the country would react to a shooting at FSU. He also reportedly asked the chatbot what time the FSU student union would be at its busiest. These messages could serve as key evidence in an upcoming trial, but the Attorney General is going further. He wants to know why the software provided this information in the first place and whether the company's safety guardrails are fundamentally broken.

Uthmeier didn't hold back in his video announcement on social media. He argued that big tech companies cannot be allowed to put safety and security at risk just to roll out new features. He also raised concerns that ChatGPT may have encouraged self-harm in some cases, pointing to lawsuits that grieving families have already filed against OpenAI. The investigation also touches on national security: the Attorney General said he fears the Chinese Communist Party could turn the same technology against the United States. He is calling on the Florida legislature to act quickly to protect children from the harms of AI.

OpenAI is trying to get ahead of the backlash. A spokesperson noted that more than 900 million people use ChatGPT every week for positive purposes such as learning new skills or navigating complex systems. The company says it works hard to deliver benefits to everyday people and to support scientific discovery. It also released a new Child Safety Blueprint this week, which proposes ways to improve laws protecting children from AI-generated abuse material. The move comes as reports of AI-generated child abuse images have risen 14% this year. OpenAI says it will cooperate fully with the Florida investigation, but the pressure is clearly mounting.

The core question is whether an AI company can be held liable for criminal activity facilitated by its software. If the Florida investigation finds that OpenAI was negligent, it could set a major legal precedent. For years, social media companies have been shielded by laws, most notably Section 230 of the Communications Decency Act, that protect them from being sued over what their users post. AI is different because the system itself generates the content. Florida is arguing that no company has the right to endanger children or facilitate crime, no matter how helpful its tool might be for others.

As the investigation moves forward, it could push OpenAI and its rivals to tighten their filters even further. The industry appears to be shifting from the "move fast and break things" era toward one of much stricter government oversight. The outcome of this probe could reshape how people everywhere interact with AI. For the families affected by the FSU shooting, this is about accountability. For the tech industry, it is a fight for the future of its business model.