The High Cost of Non-Compliance: Why AI Oversight is No Longer Optional

Regulatory fines for data misuse and non-compliant AI systems are climbing, and the cost of a violation can dwarf what compliance would have cost up front. The days of deploying AI tools without rigorous oversight are gone. Today, compliance isn’t a bottleneck; it’s a foundation for responsible innovation. Modern teams can’t afford to treat AI compliance as a separate legal function. They must integrate it directly into their development lifecycle so that legal guardrails accelerate, rather than inhibit, their ability to deliver powerful AI solutions.

Why “Compliance as Code” Is the New Development Standard

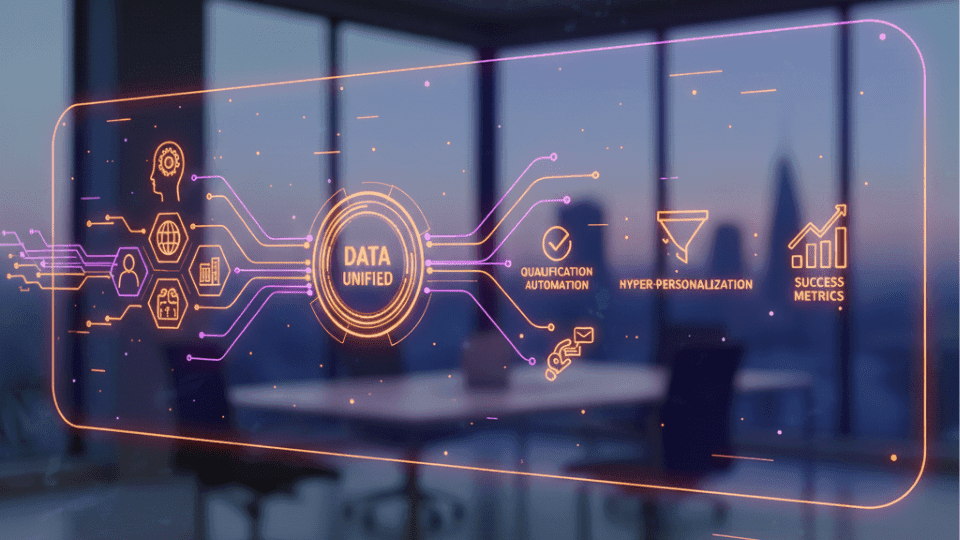

For many teams, compliance remains a reactive process: building a product, waiting for the legal review, and then scrambling to fix issues. This approach is slow, expensive, and fragile. The modern standard shifts to “Compliance as Code” (CaC), embedding regulatory requirements and ethical principles directly into the software development pipeline.

CaC allows developers to run automated checks for bias, data leakage, and regulatory adherence (like GDPR or the EU AI Act) at every stage, from initial model training to final deployment. This proactive integration prevents compliance issues from becoming major, costly roadblocks later on. It transforms legal requirements into measurable, executable code, making adherence a continuous, automated process.
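
As a rough illustration of what “executable” requirements might look like, here is a minimal Python sketch of a pre-deployment gate. The report fields (`pii_columns`, `disparity_ratio`), the `POLICY` values, and all function names are hypothetical. Real limits would come from your legal and compliance review, not from this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


# Hypothetical policy values for illustration only.
POLICY = {
    "max_pii_columns": 0,
    "min_disparity_ratio": 0.8,
}


def check_no_pii_columns(report: Dict) -> CheckResult:
    """Fail if the data report lists more PII-flagged columns than policy allows."""
    pii = report.get("pii_columns", [])
    ok = len(pii) <= POLICY["max_pii_columns"]
    return CheckResult("no_pii_columns", ok, f"flagged columns: {pii}")


def check_disparity_ratio(report: Dict) -> CheckResult:
    """Fail if the pre-computed disparity ratio is below the policy minimum."""
    ratio = report["disparity_ratio"]
    ok = ratio >= POLICY["min_disparity_ratio"]
    return CheckResult("disparity_ratio", ok, f"ratio={ratio:.2f}")


# Each check is a plain function, so adding a requirement means adding a function.
CHECKS: List[Callable[[Dict], CheckResult]] = [
    check_no_pii_columns,
    check_disparity_ratio,
]


def run_gate(report: Dict) -> List[CheckResult]:
    """Run every registered check and return all results for the audit record."""
    return [check(report) for check in CHECKS]


def gate_passes(report: Dict) -> bool:
    """The gate passes only when every check passes."""
    return all(result.passed for result in run_gate(report))
```

In a CI pipeline, a script built on this idea could exit with a non-zero status when `gate_passes` returns `False`, which would block the deployment step.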

Mapping Regulatory Risk to AI Functions

Effective AI compliance requires a granular understanding that moves past broad concepts like “fairness.” Modern teams must map specific regulatory risks to specific AI functions within their system. A loan application scoring model faces different bias risks than an internal document summarization tool.

The key is identifying high-risk areas first. For example, if your AI makes decisions about finance, hiring, or healthcare, it is likely to fall under stricter non-discrimination rules. This determines which fairness metrics you use and the level of Explainable AI (XAI) required for auditability. Teams should categorize their AI tools based on their potential impact:

- Low-Risk: Content generation, internal search

- Medium-Risk: Personalized recommendations, marketing segmentation

- High-Risk: Automated hiring, credit scoring, medical diagnostics

Compliance effort that is proportional to the risk level saves resources and concentrates attention where it matters most.
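
One way to make this categorization usable by tooling is a simple lookup table. The sketch below reuses the example use cases from the list above. The identifiers and the controls assigned to each tier are assumptions for illustration, not regulatory requirements.

```python
from enum import Enum
from typing import List


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Use cases from the tiers listed above; the keys are hypothetical identifiers.
USE_CASE_TIERS = {
    "content_generation": RiskTier.LOW,
    "internal_search": RiskTier.LOW,
    "personalized_recommendations": RiskTier.MEDIUM,
    "marketing_segmentation": RiskTier.MEDIUM,
    "automated_hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_diagnostics": RiskTier.HIGH,
}

# Illustrative controls per tier (an assumption, to be replaced by your own policy).
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["data_inventory"],
    RiskTier.MEDIUM: ["data_inventory", "drift_monitoring"],
    RiskTier.HIGH: [
        "data_inventory",
        "drift_monitoring",
        "fairness_metrics",
        "explainability_report",
    ],
}


def required_controls(use_case: str) -> List[str]:
    """Return the controls for a use case.

    Unknown use cases default to HIGH so they get reviewed rather than skipped.
    """
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return REQUIRED_CONTROLS[tier]
```

Defaulting unknown tools to the highest tier is a deliberately conservative choice: a new system has to be classified before it can receive lighter treatment.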

The Crucial Role of Automated Monitoring and Auditability

A static compliance review at launch is insufficient. AI models drift over time. They encounter new data, and their performance can degrade or, critically, they can develop new biases. Achieving compliance requires automated, continuous monitoring.

Modern compliance platforms track model performance and ethical metrics in real time, looking for deviations that signal potential non-compliance. This involves several automated checks:

- Drift Detection: Monitoring input data and model predictions for shifts that move the system outside of compliant boundaries.

- Adversarial Robustness: Testing the model against deliberate attempts to exploit vulnerabilities, such as prompt injection or data poisoning.

- Fairness Thresholds: Automatically alerting stakeholders if disparity metrics (e.g., acceptance rates across different demographic groups) cross pre-defined thresholds, whether those are set by internal policy or by regulation.
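
To make the drift check above concrete, here is a small sketch using the Population Stability Index (PSI), one common way to compare a baseline distribution with live data. PSI is a technique swapped in for illustration, since the article does not prescribe a metric. The bin edges, the `ALERT_THRESHOLD` of 0.25 (a widely used rule of thumb, not a legal limit), and the function names are assumptions.

```python
import bisect
import math
from typing import List, Sequence

# Rule-of-thumb alert level; an assumption, not a regulatory value.
ALERT_THRESHOLD = 0.25


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values per bin; out-of-range values are clamped to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        # bisect_right finds the bin whose left edge is <= v.
        i = bisect.bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)
        counts[i] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for pe, pa in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        # Floor empty bins at eps to avoid log(0) and division by zero.
        pe, pa = max(pe, eps), max(pa, eps)
        psi += (pa - pe) * math.log(pa / pe)
    return psi


def has_drifted(expected, actual, edges, threshold: float = ALERT_THRESHOLD) -> bool:
    """True when the PSI between baseline and live data exceeds the threshold."""
    return population_stability_index(expected, actual, edges) > threshold
```

A monitoring job might call `has_drifted` on a schedule, comparing a stored training-time sample of a feature or prediction score against a recent window of production values.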

These continuous checks create an unbroken audit trail, which is essential. You need to be able to show how and why your model reached a given decision, something many emerging regulations expect of high-risk systems.
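
One possible way to make an audit trail tamper-evident is to chain records together with hashes, so that editing an old entry invalidates everything after it. The sketch below uses only Python’s standard `hashlib` and `json` modules. The record fields and function names are hypothetical, and a production system would also need durable storage and access controls, which are out of scope here.

```python
import hashlib
import json
from typing import Dict, List

# Placeholder "previous hash" for the first record in the log.
GENESIS = "0" * 64


def _digest(prev_hash: str, payload: Dict) -> str:
    """Hash the previous hash together with a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: List[Dict], payload: Dict) -> Dict:
    """Append a decision record linked to the hash of the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify_log(log: List[Dict]) -> bool:
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

For example, after appending two decision records, `verify_log` returns `True`. If someone later changes the payload of the first record, it returns `False`.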

Cross-Functional Collaboration: Breaking Down Silos

In the past, AI teams operated in isolation, handing off a finished model to legal and compliance for a final stamp. This siloed approach fails in a rapidly evolving regulatory environment. Compliance is now a shared responsibility that demands collaboration across three key groups:

- Engineers and Data Scientists: They are responsible for implementing the Compliance as Code tools and integrating XAI techniques. They need training on ethical coding practices, not just technical deployment.

- Legal and Compliance Officers: They must translate vague regulatory texts into clear, executable technical requirements (e.g., “The model’s disparate impact ratio must not fall below 0.8”).

- Product Managers and Business Owners: They define the ethical use cases and provide the contextual understanding necessary to interpret monitoring alerts. They ensure business goals align with ethical outcomes.

By making compliance a shared, measurable KPI for all three groups, organizations ensure that AI governance is effective, practical, and fast.
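
To show how a requirement like the disparate impact example above could become a shared, testable artifact, here is a minimal sketch. It computes the ratio as the lowest group selection rate divided by the highest and compares it with the 0.8 figure from the example. The data layout (a list of `(group, accepted)` pairs) and the function names are assumptions.

```python
from typing import Dict, List, Tuple

Outcome = Tuple[str, bool]  # (group label, whether the applicant was accepted)


def selection_rates(outcomes: List[Outcome]) -> Dict[str, float]:
    """Acceptance rate per group."""
    totals: Dict[str, int] = {}
    accepted_counts: Dict[str, int] = {}
    for group, accepted in outcomes:
        totals[group] = totals.get(group, 0) + 1
        accepted_counts[group] = accepted_counts.get(group, 0) + int(accepted)
    return {g: accepted_counts[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes: List[Outcome]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was accepted in any group, so there is no disparity to measure.
        return 1.0
    return min(rates.values()) / highest


def meets_threshold(outcomes: List[Outcome], threshold: float = 0.8) -> bool:
    """True when the ratio is at or above the agreed threshold."""
    return disparate_impact_ratio(outcomes) >= threshold
```

With acceptance rates of 50% for one group and 30% for another, the ratio is 0.6 and the check fails. At 50% and 45%, the ratio is 0.9 and it passes. Legal can own the threshold, engineering the code, and product the interpretation of a failure.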

Building Your AI Governance Roadmap

Successfully embedding compliance into your team’s DNA starts with a clear roadmap. Don’t attempt to overhaul your entire system at once. Start small, prove the concept, and then scale.

- Establish a Governance Framework: Define roles and responsibilities and create a documented policy outlining risk appetite and ethical commitments.

- Pilot XAI and Monitoring: Select one high-risk model and integrate a dashboard to monitor its fairness, robustness, and drift.

- Standardize Data Inventory: Document the source, usage, and known biases of all datasets feeding your AI models. You can’t comply if you don’t know your data’s lineage.

- Implement Automated Tooling: Integrate open-source or commercial CaC tools directly into your MLOps pipeline for automated pre-deployment checks.
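
As one possible starting point for the data inventory step above, the sketch below records the source, usage, and known biases of each dataset and flags entries that are incomplete. The field names, the `bias_review_done` flag, and the example dataset are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DatasetRecord:
    name: str
    source: str
    usage: str
    known_biases: List[str] = field(default_factory=list)
    # Distinguishes "reviewed, none found" from "never reviewed".
    bias_review_done: bool = False

    def gaps(self) -> List[str]:
        """List what is missing before this dataset counts as documented."""
        problems = []
        if not self.source.strip():
            problems.append("missing source")
        if not self.usage.strip():
            problems.append("missing usage")
        if not self.bias_review_done:
            problems.append("bias review not recorded")
        return problems


def inventory_gaps(records: List[DatasetRecord]) -> Dict[str, List[str]]:
    """Map each incompletely documented dataset to its list of gaps."""
    result = {}
    for record in records:
        problems = record.gaps()
        if problems:
            result[record.name] = problems
    return result
```

A pre-deployment check could refuse to ship a model while `inventory_gaps` returns anything for the datasets that model was trained on.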

This structured approach transforms compliance from a reactive burden into a competitive advantage. It builds trust with regulators and, more importantly, with your customers.

The Compliance Advantage

AI is rapidly changing the technological landscape, and regulators are swiftly following suit. Teams that embrace “Compliance as Code” and continuous monitoring aren’t just meeting minimum legal requirements; they are future-proofing their products and reputation. Compliance drives quality, stability, and trust. Will your team lead the charge in responsible AI deployment, or will you be scrambling to fix yesterday’s oversights?